Comparison with HSSN

North Star is purpose-built for the Sonic SVM ecosystem. It evolves Sonic's own HSSN v1 architecture into a leaner, session-scoped execution primitive, and draws on the theoretical framework from the MagicBlock Ephemeral Rollups paper (arXiv:2311.02650). The differences lie in the execution model, the security guarantees, and the depth of integration with Sonic SVM.

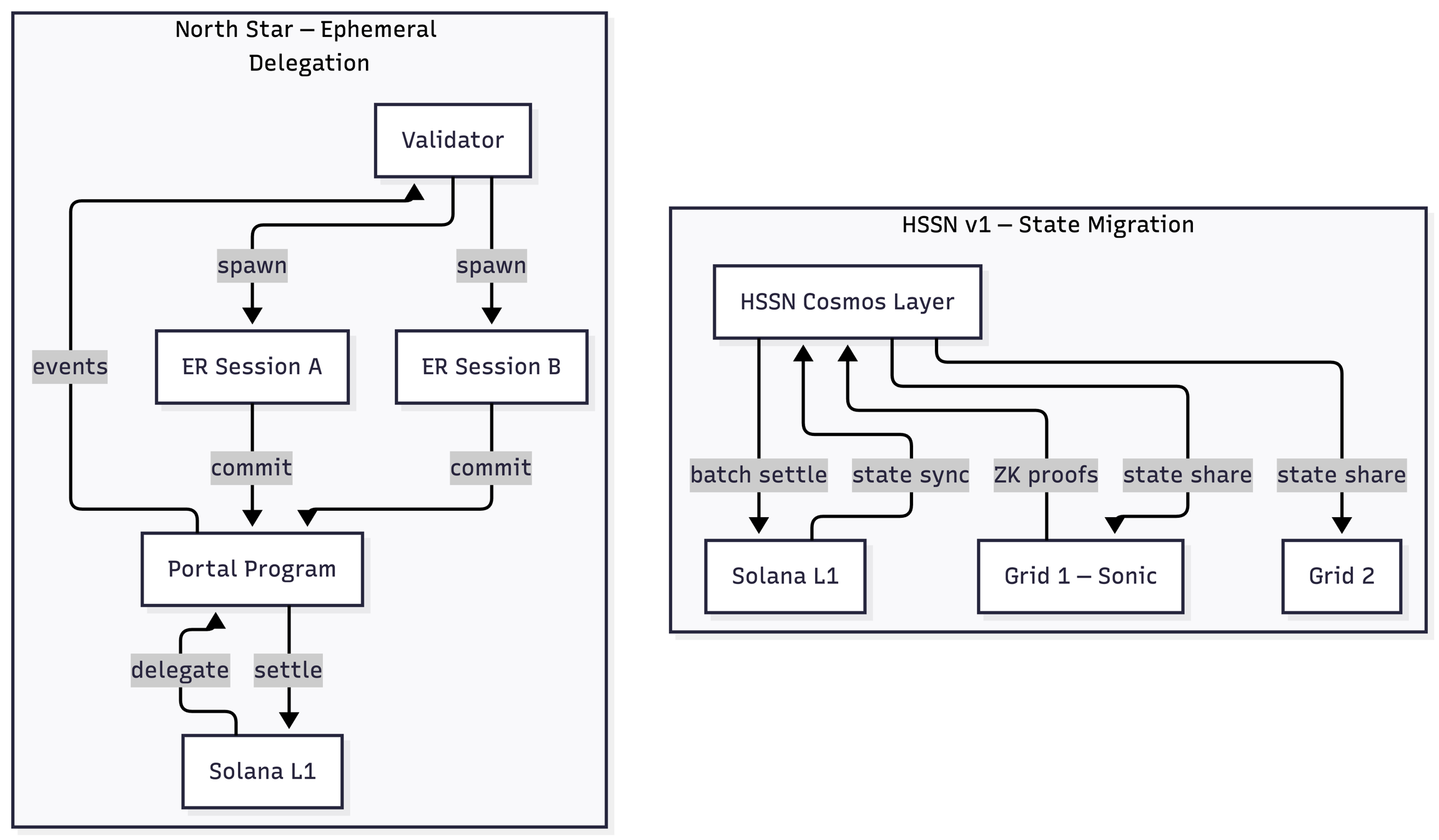

vs. HyperGrid Shared State Network (HSSN v1)

HSSN v1 was Sonic's first-generation approach to enabling cross-grid state sharing. It established the foundational idea of extending Solana's execution environment into specialized SVM grids — the same architectural instinct that drives North Star. North Star represents an evolution of this model: rather than sharing persistent state across grids via a coordination layer, it provisions dedicated, session-scoped execution that settles directly back to L1.

State Model

HSSN v1: Bidirectional state synchronization between Solana L1 ↔ HSSN coordination layer ↔ Grids. Write operations flow through an 11-step lock-sync-execute-sync-unlock pipeline.

North Star: Account delegation. Specific accounts are transferred to the Portal Program for the duration of a session. Write pipeline: 5 steps. Settlement is direct L1 → Portal → program.
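To make the delegation lifecycle concrete, here is a minimal TypeScript sketch that assumes a simplified in-memory ledger. The `Ledger` class, its `delegate`/`settle` methods, and the `PORTAL_PROGRAM` placeholder are hypothetical illustrations, not the Portal Program's actual interface. The sketch only shows ownership moving to the Portal for the length of a session and returning, together with the final state, at settlement.

```typescript
// Hypothetical model of session-scoped account delegation. Names are
// illustrative only; this is not the Portal Program's real interface.
type Pubkey = string;

interface AccountRecord {
  owner: Pubkey;    // program that currently owns the account
  data: Uint8Array; // account state
}

interface Delegation {
  originalOwner: Pubkey; // owner to restore when the session settles
  sessionId: string;     // ER session currently holding the account
}

const PORTAL_PROGRAM: Pubkey = "PortalProgram"; // placeholder, not a real program id

class Ledger {
  accounts = new Map<Pubkey, AccountRecord>();
  delegations = new Map<Pubkey, Delegation>();

  // Session start: hand ownership of the account to the Portal Program.
  delegate(account: Pubkey, sessionId: string): void {
    const rec = this.accounts.get(account);
    if (!rec) throw new Error(`unknown account ${account}`);
    if (this.delegations.has(account)) throw new Error(`${account} is already delegated`);
    this.delegations.set(account, { originalOwner: rec.owner, sessionId });
    rec.owner = PORTAL_PROGRAM;
  }

  // Session end: commit the ER's final state and return ownership.
  settle(account: Pubkey, sessionId: string, finalData: Uint8Array): void {
    const d = this.delegations.get(account);
    const rec = this.accounts.get(account);
    if (!d || !rec || d.sessionId !== sessionId) {
      throw new Error(`${account} is not delegated to ${sessionId}`);
    }
    rec.data = finalData;
    rec.owner = d.originalOwner;
    this.delegations.delete(account);
  }
}

// Usage: delegate an order-book account for one session, then settle it.
const ledger = new Ledger();
ledger.accounts.set("orderbook", { owner: "DexProgram", data: new Uint8Array([0]) });
ledger.delegate("orderbook", "session-1");
const ownerDuringSession = ledger.accounts.get("orderbook")!.owner; // "PortalProgram"
ledger.settle("orderbook", "session-1", new Uint8Array([42]));
```

The sketch rejects a second delegation of an account that is already held by a session. It also rejects settlement from the wrong session. These are the two invariants any real implementation would need in some form.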

Coordination Layer

HSSN v1: A Cosmos-based consensus layer (HSSN) sits between L1 and Grids. Enables cross-grid composability but introduces an additional consensus round (~6-7s BFT finality) and a separate validator set.

North Star: No intermediate coordination layer. The Portal Program on Solana L1 is the anchor. Security relies on L1 finality + the dispute game protocol, not an additional consensus mechanism.

Session Model

HSSN v1: Grids are persistent, long-running chains — designed for continuous shared state across users over time.

North Star: Ephemeral Rollups (ERs) are session-scoped: each is provisioned for a bounded workload and terminated when that workload completes. Sonic SVM provides the persistent environment, and North Star adds on-demand burst capacity for time-bounded, high-intensity workloads. The two models are complementary, not competing.

Scalability

HSSN v1: Horizontal scaling via multiple Grids, with HSSN coordinating state across them. Throughput scales with Grid count; coordination overhead scales with shared-state complexity.

North Star: Embarrassingly parallel. Each ER is fully independent, so 1,000 concurrent sessions run as 1,000 isolated ERs with no cross-ER coordination overhead.
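Full isolation means that session results cannot depend on scheduling. The minimal sketch below models each session as a private list of writes; the `EphemeralSession` type and the `execute` and `runInOrder` functions are hypothetical. It runs 1,000 sessions in two different orders and gets identical results. That order-independence is what allows sessions to be placed on separate ERs without any coordination.

```typescript
// Hypothetical model: each ER session owns a private state map and reads or
// writes nothing outside it, so execution order cannot change any result.
type Write = [key: string, delta: number];

interface EphemeralSession {
  id: number;
  writes: Write[];
}

// Execute one session against its own fresh state; nothing global is touched.
function execute(session: EphemeralSession): Map<string, number> {
  const state = new Map<string, number>();
  for (const [key, delta] of session.writes) {
    state.set(key, (state.get(key) ?? 0) + delta);
  }
  return state;
}

// Run every session in the given order and collect results by session id.
function runInOrder(sessions: EphemeralSession[]): Map<number, Map<string, number>> {
  const results = new Map<number, Map<string, number>>();
  for (const s of sessions) results.set(s.id, execute(s));
  return results;
}

// 1,000 sessions, each incrementing its own private "counter".
const sessions: EphemeralSession[] = Array.from(
  { length: 1000 },
  (_, id): EphemeralSession => ({ id, writes: [["counter", id], ["counter", 1]] }),
);

const forward = runInOrder(sessions);
const reversed = runInOrder([...sessions].reverse());
```

Any mutable state shared between sessions would break this property. It would then reintroduce the kind of cross-instance coordination that HSSN v1 performs.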

📊 Summary: HSSN v1 optimized for persistent, cross-grid state sharing. North Star optimizes for isolated, session-scoped burst execution. North Star builds on the architectural foundation HSSN v1 established.

vs. MagicBlock Ephemeral Rollups

Security Model

MagicBlock: Relies on a trusted sequencer and MagicBlock operator infrastructure. Dispute resolution and fraud proofs are not yet in production — the current architecture trusts the ER operator to produce correct state transitions.

North Star: Implements a 3-layer security stack. (1) A Dispute Game uses binary-search bisection to isolate a disputed step in O(log n) rounds. (2) ZK Finality relies on a single-transaction ZK proof that is generated only when a dispute is found. (3) Accelerated Challenges use Checkpoint Bonds every ~30 seconds, enabling challenge resolution in minutes instead of the typical 7-day optimistic rollup window.
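The bisection step of a dispute game can be sketched as a binary search over state roots. This is a generic illustration of the technique, not North Star's protocol. It assumes that each party publishes the state root after every transaction, that both agree on the starting root, and that they disagree on the final one. The `bisect` function is hypothetical, and each loop iteration stands in for one on-chain challenge round.

```typescript
// Generic bisection dispute game over per-transaction state roots (a sketch).
// Assumption: roots[i] is the state root after the first i transactions.

interface BisectionResult {
  disputedTx: number; // 1-based index of the single transaction left to prove
  rounds: number;     // bisection rounds used, about ceil(log2(n))
}

function bisect(proposer: string[], challenger: string[]): BisectionResult {
  const n = proposer.length - 1;
  if (n < 1 || challenger.length !== proposer.length) throw new Error("trace length mismatch");
  if (proposer[0] !== challenger[0]) throw new Error("parties must agree on the starting root");
  if (proposer[n] === challenger[n]) throw new Error("no dispute: final roots match");

  // Invariant: the parties agree at index lo and disagree at index hi.
  let lo = 0;
  let hi = n;
  let rounds = 0;
  while (hi - lo > 1) {
    const mid = Math.floor((lo + hi) / 2);
    if (proposer[mid] === challenger[mid]) lo = mid;
    else hi = mid;
    rounds++;
  }
  // Transaction `hi` turns the agreed root at lo into the disputed root at hi,
  // so only this one step needs to be re-executed or proven.
  return { disputedTx: hi, rounds };
}

// Usage: a 1,024-transaction trace that goes wrong at transaction 700.
const honest = Array.from({ length: 1025 }, (_, i) => `root-${i}`);
const faulty = honest.map((r, i) => (i >= 700 ? `bad-${i}` : r));
const result = bisect(faulty, honest); // { disputedTx: 700, rounds: 10 }
```

For the 1,024-transaction trace above, the search isolates transaction 700 in 10 rounds (log2 1024). Only that single step then has to be settled, which is the point at which layer (2) would apply a ZK proof.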

Execution Environment

MagicBlock: General-purpose ER with 33+ Solana-compatible JSON-RPC methods, broad tooling for session keys, VRFs, oracles, and the BOLT ECS framework. Optimized for game development breadth.

North Star: Purpose-built SVM runtime focused on raw performance for latency-critical workloads: orderbook matching, real-time game state, and high-frequency DeFi. Specialized for depth in performance-critical paths.

Integration Model

MagicBlock: Requires programs to integrate the MagicBlock delegation SDK and opinionated developer tooling (BOLT framework, session keys as a first-class concept).

North Star: Minimal program modification required. The North Star SDK handles session management, delegation, and routing transparently, so existing Solana programs can be delegation-enabled with small code changes. The Router is stateless: if a transaction's accounts aren't delegated, the transaction falls through to L1 RPC.
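The fall-through behaviour of a stateless router can be sketched as a pure function of the transaction and a delegation lookup. This is a minimal sketch under stated assumptions. The names `route` and `DelegationLookup`, and all endpoint strings, are hypothetical. Delegation status is assumed to be queryable per account, for example from on-chain delegation records. The policy for transactions that mix delegated and undelegated accounts is also an assumption, not documented North Star behaviour.

```typescript
// Hypothetical stateless router: it stores no session state itself and
// decides each request from the transaction plus an injected lookup.
type Pubkey = string;

interface Transaction {
  accounts: Pubkey[]; // accounts the transaction touches
}

interface Route {
  kind: "er" | "l1";
  endpoint: string;
}

// Returns the ER endpoint an account is delegated to, or undefined if none.
type DelegationLookup = (account: Pubkey) => string | undefined;

function route(tx: Transaction, lookup: DelegationLookup, l1Rpc: string): Route {
  const endpoints = new Set<string>();
  for (const account of tx.accounts) {
    const ep = lookup(account);
    if (ep !== undefined) endpoints.add(ep);
  }
  // No delegated accounts: fall through to L1 RPC.
  if (endpoints.size === 0) return { kind: "l1", endpoint: l1Rpc };
  // Assumption: any delegated account sends the transaction to its ER.
  if (endpoints.size === 1) return { kind: "er", endpoint: Array.from(endpoints)[0] };
  // Assumption: accounts held by two different ERs cannot share a transaction.
  throw new Error("transaction spans accounts delegated to different ERs");
}

// Usage with an in-memory stand-in for delegation records.
const delegatedTo = new Map<Pubkey, string>([["orderbook", "https://er-1.example"]]);
const lookup: DelegationLookup = (a) => delegatedTo.get(a);
const toL1 = route({ accounts: ["wallet"] }, lookup, "https://l1.example");
const toEr = route({ accounts: ["orderbook", "wallet"] }, lookup, "https://l1.example");
```

Keeping the router a pure function means any number of router instances can serve traffic interchangeably. All authoritative delegation state then lives on-chain rather than in the router.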
